Optimal Control Problems

Optimal control problems arise when we want to determine a control variable that optimizes an objective function while satisfying physical or dynamical constraints.

These problems appear naturally in engineering, physics, economics and many areas of applied mathematics.

In many applications the goal is to minimize a functional of the form

$$ \min_{u} J(x,u) $$

subject to a constraint

$$ c(x,u) = 0 $$

where

- \( x \) represents the state variable

- \( u \) represents the control variable

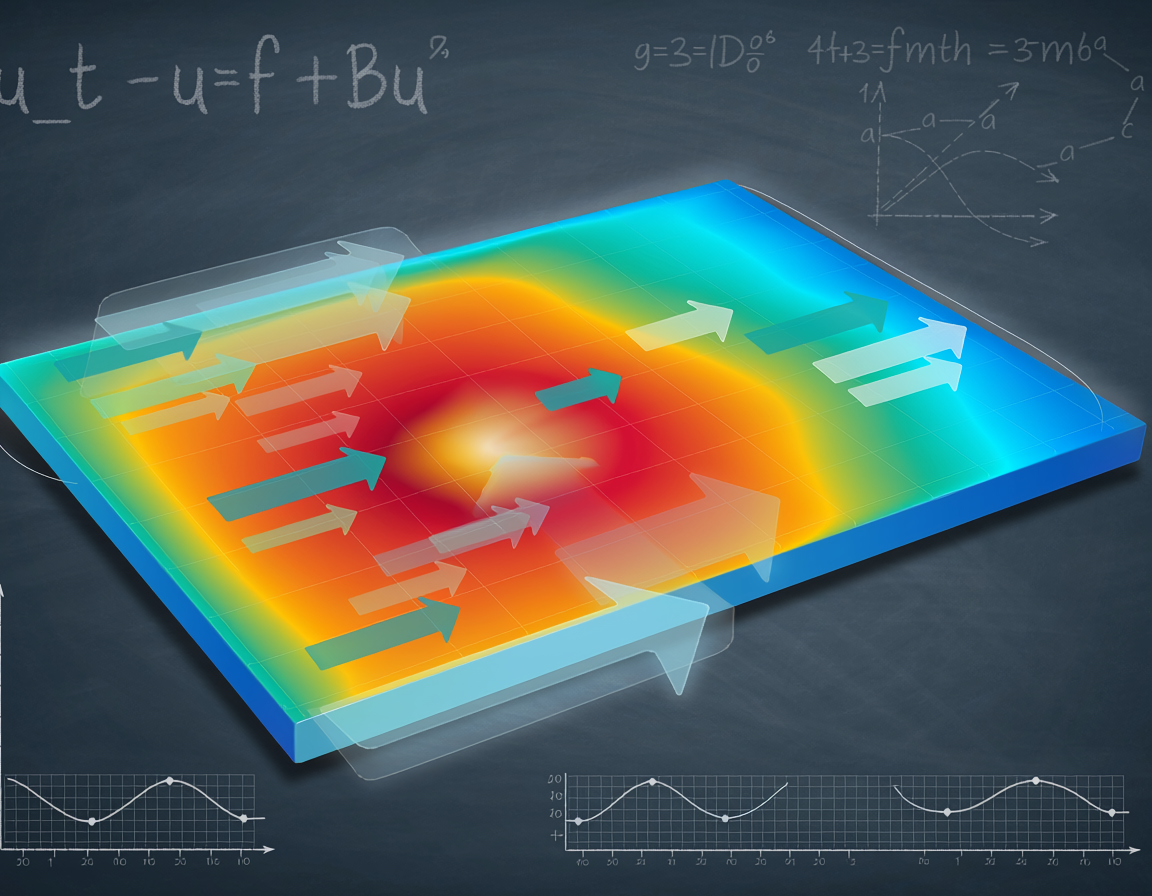

In many scientific computing problems the constraint takes the form of a partial differential equation

$$ A(u)x = f $$

which, with \( c(x,u) = A(u)x - f \), leads to the class of PDE-constrained optimization problems.

Adjoint Method

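A common technique for computing gradients efficiently is the adjoint method. Rather than differentiating the state \( x \) with respect to each component of the control \( u \), which requires one linearized solve per component, the method introduces a single adjoint equation for an auxiliary variable.

This allows the gradient of the objective functional to be computed at a cost comparable to solving the PDE itself, essentially independent of the number of control parameters.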
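The idea can be illustrated on a small discrete problem. The following is a minimal sketch, not a definitive implementation, and everything specific in it is an assumption made for illustration: the constraint is taken as \( c(x,u) = A(u)x - f \) with \( A(u) = K + \mathrm{diag}(u) \), where `K` is a tridiagonal stand-in for a discretized operator, and the objective is a tracking term plus a small penalty on the control with hypothetical target `x_d` and weight `alpha`. The sign of the adjoint variable follows the convention \( \mathcal{L} = J + \lambda^{T} c \).

```python
import numpy as np

def build_K(n):
    """Tridiagonal 1D Laplacian stencil; stands in for a discretized PDE operator."""
    return 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)

def objective(u, K, f, x_d, alpha):
    """Reduced objective: solve A(u) x = f, then evaluate J(x, u)."""
    x = np.linalg.solve(K + np.diag(u), f)
    return 0.5 * np.sum((x - x_d) ** 2) + 0.5 * alpha * np.sum(u ** 2)

def adjoint_gradient(u, K, f, x_d, alpha):
    """Gradient of the reduced objective from one state and one adjoint solve."""
    A = K + np.diag(u)
    x = np.linalg.solve(A, f)               # state equation: A(u) x = f
    lam = np.linalg.solve(A.T, -(x - x_d))  # adjoint equation: A^T lam = -dJ/dx
    # dc/du_i = (dA/du_i) x = x_i e_i, so (dc/du)^T lam is lam * x elementwise
    return alpha * u + lam * x

def finite_difference_gradient(u, K, f, x_d, alpha, h=1e-6):
    """Central differences: two state solves per control component (check only)."""
    g = np.zeros_like(u)
    for i in range(u.size):
        e = np.zeros_like(u)
        e[i] = h
        g[i] = (objective(u + e, K, f, x_d, alpha)
                - objective(u - e, K, f, x_d, alpha)) / (2.0 * h)
    return g

if __name__ == "__main__":
    n = 6
    K = build_K(n)
    f = np.ones(n)
    x_d = np.linspace(0.0, 1.0, n)   # hypothetical target state
    u = np.linspace(0.5, 1.5, n)     # positive control keeps A(u) invertible
    g_adj = adjoint_gradient(u, K, f, x_d, alpha=1e-2)
    g_fd = finite_difference_gradient(u, K, f, x_d, alpha=1e-2)
    print("max difference:", np.max(np.abs(g_adj - g_fd)))
```

The adjoint route needs two linear solves regardless of the length of `u`, whereas the finite-difference check needs two solves per control component. That difference is the reason the adjoint method is preferred when the control has many parameters.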

Examples

Example of inline math appearing in optimal control: \( \nabla J(u) = \frac{\partial J}{\partial u} \)

Block equation example:

$$ \mathcal{L}(x,u,\lambda) = J(x,u) + \lambda^{T} c(x,u) $$

where \( \lambda \) represents the adjoint variable.
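As a sketch of how this Lagrangian is typically used, assume that \( x \) and \( u \) are finite-dimensional vectors, that gradients are written as column vectors, and that the Jacobian \( \partial c / \partial x \) is invertible (none of these assumptions is stated above). Setting the derivative with respect to \( \lambda \) to zero recovers the constraint, i.e. the state equation:

$$ \frac{\partial \mathcal{L}}{\partial \lambda} = c(x,u) = 0 $$

Setting the derivative with respect to \( x \) to zero gives the adjoint equation, a linear system for \( \lambda \):

$$ \frac{\partial \mathcal{L}}{\partial x} = \frac{\partial J}{\partial x} + \left( \frac{\partial c}{\partial x} \right)^{T} \lambda = 0 $$

With \( x \) and \( \lambda \) satisfying these two equations, the remaining derivative is the gradient of the objective with respect to the control:

$$ \frac{\partial \mathcal{L}}{\partial u} = \frac{\partial J}{\partial u} + \left( \frac{\partial c}{\partial u} \right)^{T} \lambda $$

At an optimum this expression also vanishes, so the three conditions together form the first-order optimality system.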